AI Safety in the Age of Autonomy

The internet's trust infrastructure was built to verify humans. It was never built to verify what humans authorize. That gap is now the most exploited vulnerability in digital security.

For most of its history, AI safety meant alignment research: making sure that future, more capable systems would pursue the goals their designers intended. The concern was reasonable and the work was serious, but it also had the convenient property of feeling far away.

That distance collapsed somewhere around mid-2025.

The field’s most urgent problems are no longer speculative. They are operational, measurable, and costing real money right now. The question is no longer what a misaligned superintelligence might do in 2040. The question is what a perfectly functioning AI agent, operating exactly as designed, is doing today with credentials it was handed once and never asked to justify again.

The Verification Stack Was Built for the Wrong Era

Every major digital trust system in production today was designed to answer a single question: is this a real person?

KYC (Know Your Customer) checks confirm a person’s identity once, at onboarding. CAPTCHA tries to distinguish a human from a script at a single interaction point. Multi-factor authentication adds friction to credential use. All of them operate on the same assumption: verify the human once, then trust the session.

That assumption worked when humans were the only actors. Humans are slow, and that slowness imposed natural rate limits on how much damage a compromised session could do.

AI agents are not bound by those limits. A single agent operating with a legitimately issued credential, within its authorized scope, can do in an hour what would take a team of twelve a week. That speed is what makes the old verification model structurally inadequate.

The security industry spent years improving authentication, then handed the session to a machine.

The Numbers Are Already Concerning

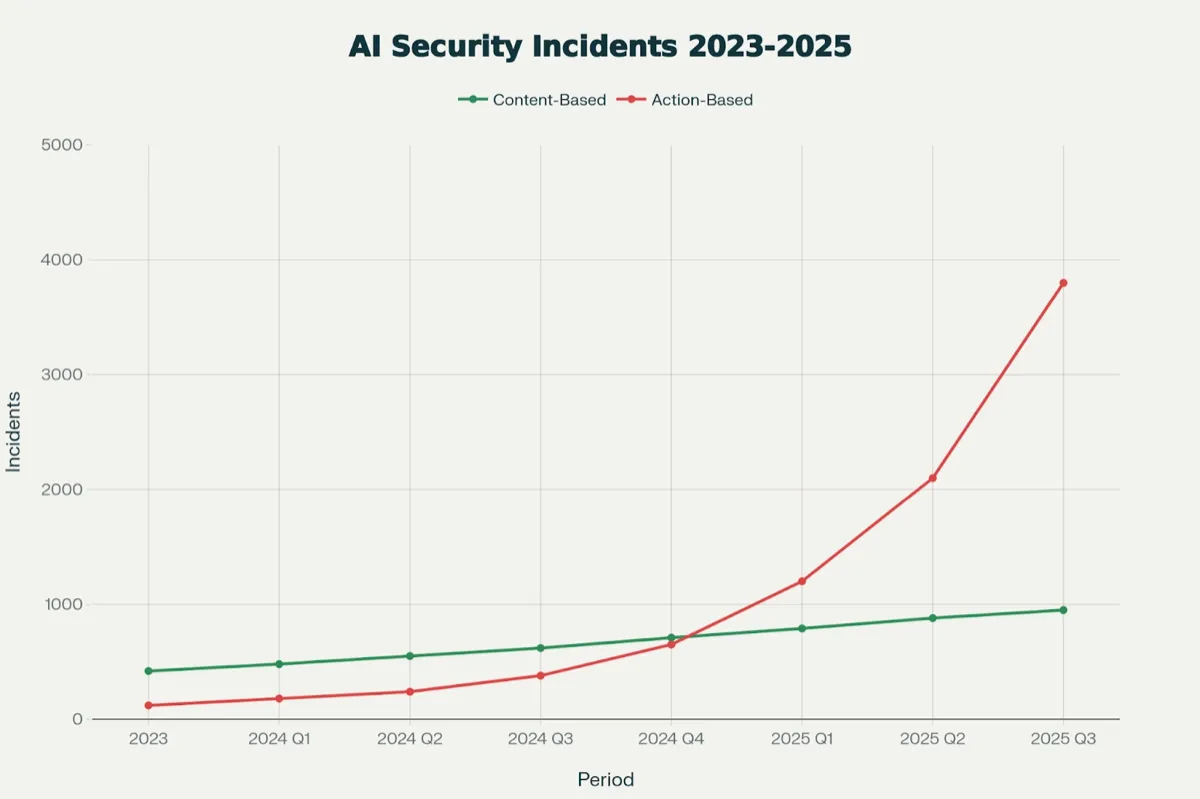

The data from 2025 and early 2026 make the scale of the problem difficult to ignore.

One in eight enterprise security incidents now involves an agentic system as either the primary target, a contributing attack vector, or an amplifier of breach impact. Agent-involved incidents grew 340% year over year between 2024 and 2025. In financial services and healthcare, the ratio is closer to one in five.

Shadow AI breaches, those involving AI tools used without the security team’s knowledge or oversight, cost an average of $4.63 million per incident, roughly $670,000 more than a standard breach. The gap is driven by delayed detection and difficulty scoping the exposure: shadow AI breaches took an average of 247 days to detect. Among organizations that experienced AI-related breaches, 97% lacked basic access controls for the agent systems involved.

Deepfake-enabled fraud compounded the damage. Losses from deepfake-related fraud and scams reached $1.1 billion in 2025, roughly triple the $360 million lost in 2024.

Source: stellarcyber.ai

Gartner predicted that by 2026, AI-generated deepfakes would lead 30% of enterprises to stop considering identity verification and authentication solutions reliable in isolation. Given the figures above, that prediction now looks conservative.

The Gap Between Identity and Authorization

The root problem is architectural, not operational. The entire identity verification stack answers the wrong question.

Identity verification tells you who someone is. Authorization tells you what they have sanctioned, right now, in this context. These are fundamentally different concepts, and the gap between them is exactly where every significant agent-related incident has occurred.

A KYC check performed at onboarding says little about whether the human behind an agent session is still aware of, or has explicitly approved, the actions that agent is currently taking. Liveness detection performed at login says nothing about what the agent does over the next six hours while the human sleeps. A credential issued once, at the start of a session, carries no information about ongoing consent.

The underlying model is: verify identity once, grant credential, trust indefinitely.

This worked when the thing holding the credential was a person with human limits. It does not work when the thing holding the credential is software that runs at the speed of compute, around the clock.

The post-breach pattern has become familiar enough to describe as a template: a legitimately verified credential, a delegated agent session, and the complete absence of any mechanism to confirm ongoing human authorization. No zero-days required. No novel exploits. Just a structural gap in the trust architecture that nobody had an incentive to close until agents made it catastrophically exploitable.

CAPTCHA Is Dead. What Replaces It?

CAPTCHA was designed to answer one question, “Is this a human?”, by testing for human-like behavior. The problem is straightforward: we spent a decade training machines to behave like humans. The test became obsolete the moment the machines got good at their jobs.

Modern anti-bot systems have evolved into behavioral analysis engines, evaluating mouse movements, device fingerprints, and network latency. But even these upgraded systems test for the wrong thing. They test whether the interaction pattern looks human. They do not test whether a human actually authorized the interaction.

The distinction matters enormously in an agentic context. An AI agent browsing a website, filling a form, or initiating a transaction can produce behavioral patterns indistinguishable from a human user. That is not a bug in the agent. That is its core design feature. Penalizing agents for behaving like humans penalizes exactly the capability that makes them useful.

The replacement for CAPTCHA is not a better CAPTCHA. It is a fundamentally different primitive: cryptographic proof of human authorization at the action level, not at the session level.
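The difference between session-level and action-level authorization can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production protocol: it assumes the user’s device holds a key that is released only after a successful local biometric check, and it uses HMAC-SHA256 from the standard library as a stand-in for the asymmetric signatures (such as passkeys) a real deployment would more likely use, so that the verifier never holds the signing key. The names `session_allows`, `human_cosign`, and `ActionVerifier` are hypothetical. The approval is bound to a hash of one specific action plus a one-time nonce, so it cannot be reused for a different amount, a different payee, or a second attempt.

```python
import hashlib
import hmac
import json
import secrets

# Assumption: this key lives on the user's device and is released only after a
# successful local biometric check. HMAC stands in for an asymmetric signature
# scheme so the sketch needs only the standard library.
DEVICE_KEY = secrets.token_bytes(32)


def session_allows(session_token_valid: bool, action: dict) -> bool:
    """Session-level model: a valid token authorizes any action whatsoever."""
    return session_token_valid


def action_digest(action: dict) -> bytes:
    """Canonical SHA-256 digest of one concrete action (type, payee, amount, ...)."""
    canonical = json.dumps(action, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).digest()


def human_cosign(action: dict, nonce: str, key: bytes = DEVICE_KEY) -> str:
    """Hypothetical co-signature produced on-device after the biometric check."""
    message = action_digest(action) + nonce.encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


class ActionVerifier:
    """Action-level model: each approval covers exactly one action, exactly once."""

    def __init__(self, key: bytes = DEVICE_KEY):
        self._key = key
        self._used_nonces: set = set()

    def accept(self, action: dict, nonce: str, signature: str) -> bool:
        if nonce in self._used_nonces:
            return False  # replayed approval
        expected = human_cosign(action, nonce, self._key)
        if not hmac.compare_digest(expected, signature):
            return False  # signature does not match this exact action
        self._used_nonces.add(nonce)
        return True


if __name__ == "__main__":
    approved = {"type": "transfer", "to": "acct-123", "amount": 250}
    nonce = secrets.token_hex(16)
    signature = human_cosign(approved, nonce)

    tampered = dict(approved, amount=25_000)
    print(session_allows(True, tampered))                       # True
    print(ActionVerifier().accept(tampered, nonce, signature))  # False

    verifier = ActionVerifier()
    print(verifier.accept(approved, nonce, signature))  # True
    print(verifier.accept(approved, nonce, signature))  # False (replay)
```

Under the session model, a valid token approves a $25,000 transfer as readily as a $250 one. Under the action-level model, changing any field of the action, or replaying the approval, invalidates the human’s signature.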

What “Know Your Agent” Actually Requires

The concept of Know Your Agent (KYA) began appearing in regulatory frameworks and enterprise security guidance during 2025 and 2026. The World Economic Forum published a formal specification in January 2026 outlining four requirements: establishing what an agent is, confirming what it is permitted to do, maintaining accountability for every action, and continuously monitoring its behavior.

The frameworks are directionally correct. What they lack is a credible answer to the implementation question: what biometric signal or verification method can actually serve as the root of trust for real-time, per-action human authorization?

The requirements for that signal are brutally specific. It must be accurate enough to resist false acceptance at scale. It must be resistant to remote spoofing and deepfake synthesis. It must not require specialized hardware beyond what most people already carry. It must be fast enough for per-action use rather than periodic ceremonies. And it must be privacy-preserving, generating proof of authorization without creating a surveillance trail.

Most existing modalities fail at least one of these criteria in ways that are disqualifying for the agentic use case.

Face biometrics rely on images that are publicly available at scale and trivially harvested from social media, and they are increasingly unreliable against deepfake generation. Iris scanning requires specialized hardware and in-person visits, making it a non-starter for real-time authorization. Fingerprints have already been defeated by synthesis tools. PINs and passwords are fundamentally incompatible with per-action signing: they introduce exactly the kind of friction that agents are deployed to eliminate.

An emerging alternative is palm biometrics. Compared with the modalities above, palms are harder to harvest and more resistant to deepfakes, and they can be verified accurately with standard smartphone cameras. That combination makes them a strong fit for real-time, privacy-preserving authorization.

In this model, the biometric proves that a real human is actively authorizing each action, which points to a viable long-term foundation for KYA.

The Four-Layer Stack

The organizations that have begun implementing real KYA infrastructure share a common architecture with four layers.

Human anchor. A biometric verification establishes a unique human identity without storing raw biometric data. This layer answers: is there a real, unique human behind this session?

Agent registration. The agent is issued a verifiable credential, cryptographically bound to the verified human principal. The credential carries scope: what the agent can do, with whom, for how long. This layer answers: what is this agent, and who owns it?

Per-action authorization. For high-stakes actions, the agent triggers a real-time human co-signature. A biometric scan on the user’s device generates an on-chain attestation. Frictionless enough for daily use. Unforgeable enough to satisfy regulators. This layer answers: did the human authorize this specific action, right now?

Continuous audit. An immutable log records which agent actions carried human co-signatures and which operated autonomously. The log proves authorization without revealing the underlying biometric. This layer answers: who is accountable for what?
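To make the four layers concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: `AgentCredential`, `AuditLog`, `authorize`, and the `HIGH_STAKES_AMOUNT` threshold are hypothetical names, not part of any published KYA specification. The human anchor (layer 1) appears only as an opaque `principal_id`, and the biometric co-signature (layer 3) is abstracted as a `cosign_check` callback. The “immutable” log is approximated with a hash chain, which makes tampering detectable rather than impossible; anchoring the latest hash somewhere external, such as the on-chain attestations mentioned above, is one way to strengthen it.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical names throughout; this illustrates the four layers, not a published KYA API.
HIGH_STAKES_AMOUNT = 1_000  # assumed threshold at which a human co-signature is required
GENESIS = "0" * 64


@dataclass(frozen=True)
class AgentCredential:
    """Layer 2: a scoped credential bound to a verified human principal."""
    agent_id: str
    principal_id: str                  # layer 1 output: opaque ID, never raw biometric data
    allowed_actions: frozenset         # what the agent can do
    allowed_counterparties: frozenset  # with whom
    expires_at: float                  # for how long (Unix timestamp)


@dataclass
class AuditLog:
    """Layer 4: append-only log; each entry commits to the hash of the one before."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _hash(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, record: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {**record, "prev": prev}
        self.entries.append({**body, "hash": self._hash(body)})

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or self._hash(body) != entry["hash"]:
                return False  # chain broken or entry altered after the fact
            prev = entry["hash"]
        return True


def authorize(cred: AgentCredential, action: dict, log: AuditLog,
              cosign_check: Callable[[dict], bool], now: Optional[float] = None) -> bool:
    """Layer 3: scope check plus a real-time human co-signature for high-stakes actions."""
    now = time.time() if now is None else now
    in_scope = (now < cred.expires_at
                and action["type"] in cred.allowed_actions
                and action["to"] in cred.allowed_counterparties)
    needs_cosign = action.get("amount", 0) >= HIGH_STAKES_AMOUNT
    # Only ask the human when the action is in scope and actually high-stakes.
    cosigned = bool(in_scope and needs_cosign and cosign_check(action))
    allowed = in_scope and (cosigned or not needs_cosign)
    # Every decision, including refusals, is logged with its co-signature status.
    log.append({"agent": cred.agent_id, "principal": cred.principal_id,
                "action": dict(action), "cosigned": cosigned,
                "allowed": allowed, "ts": now})
    return allowed


if __name__ == "__main__":
    cred = AgentCredential("agent-7", "principal-3f9a", frozenset({"purchase"}),
                           frozenset({"merchant-42"}), expires_at=time.time() + 3600)
    log = AuditLog()
    small = {"type": "purchase", "to": "merchant-42", "amount": 40}
    large = {"type": "purchase", "to": "merchant-42", "amount": 5_000}
    print(authorize(cred, small, log, cosign_check=lambda a: False))  # True: below threshold
    print(authorize(cred, large, log, cosign_check=lambda a: False))  # False: no co-signature
    print(authorize(cred, large, log, cosign_check=lambda a: True))   # True: human co-signed
    print(log.verify())                                                # True
```

Logging refusals alongside approvals is a deliberate choice in this sketch: the audit layer can only answer “who is accountable for what?” if it also records what the agent tried and was denied.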

The financial stakes for this infrastructure are not abstract. Agentic commerce, in which agents negotiate, purchase, and transact on behalf of users, is already mainstream. OpenAI’s Agentic Commerce Protocol, Mastercard’s agent payment rails, and Walmart’s in-chat commerce integrations together represent trillions of dollars in potential transaction value flowing through agent sessions. Without a human authorization layer, every one of those transactions is a potential liability event.

The Compounding Problem

The crises are not isolated. They compound.

Social platforms such as X are flooded with AI-generated content, which has degraded public trust in digital media. Deepfaked executives authorizing transactions, synthetic voices instructing fund transfers, and agent-generated messages indistinguishable from human-written ones together produce an epistemic crisis in digital communication, where nothing can be taken at face value and no signal is reliable.

The pattern repeats in every domain where digital identity intersects with value transfer: if you cannot prove that a human authorized an action, the action is exploitable at machine speed.

The Window

The building blocks to solve this problem already exist, among them palm biometrics and verifiable credential standards. None of them is theoretical: they are deployed, tested, and operational.

What does not yet exist is consensus. The KYA standard is being defined right now, in real time, across regulatory bodies, enterprise security frameworks, and protocol governance structures. The companies, regulators, and infrastructure providers that participate in defining it will determine which biometric modality becomes the trust anchor for the next decade of digital infrastructure.

This article was written by the research team at VeryAI, a company building the identity layer for AI safety. Learn more at very.org.